📝 Paper Summary

Algorithmic Collusion

LLM-based Pricing

AI Economics

Widespread adoption of the same LLM for pricing creates a shared latent preference that, when combined with high-fidelity outputs and infrequent retraining, drives markets toward stable collusive outcomes.

Core Problem

Competing sellers increasingly delegate pricing to the same few dominant LLMs, potentially creating correlation in pricing strategies without explicit communication.

Why it matters:

- Regulatory bodies (FTC, DOJ) warn that algorithmic pricing can facilitate illegal collusion, but current regulations do not address LLM-specific mechanisms

- Unlike Reinforcement Learning agents that learn collusion over millions of steps, LLMs arrive pre-trained with business knowledge and may collude much faster

- Market concentration means competitors often use the same model (e.g., ChatGPT), creating a 'shared knowledge infrastructure' that standard antitrust frameworks miss

Concrete Example:

Two competing retailers ask ChatGPT for pricing advice. Because the model has a latent preference for high prices (θ > 0.5) and sellers set temperature to near-zero for reliability (high fidelity ρ), both receive and adopt 'High Price' recommendations. The model observes this high-profit outcome, retraining reinforces the high-price preference, and the market locks into collusion.

Key Novelty

Collusion via Shared Latent Preference & Output Fidelity

- Models the LLM not as a learning agent starting from scratch, but as a system with a pre-existing latent preference (propensity) that is shared across users

- Identifies a 'phase transition' governed by output fidelity (reliability): high fidelity—desired for robustness—inadvertently destabilizes competitive pricing and creates a stable collusive equilibrium

- Demonstrates that infrequent retraining (large batches), driven by cost, amplifies collusion by suppressing the stochastic noise that might otherwise restore competition

Architecture

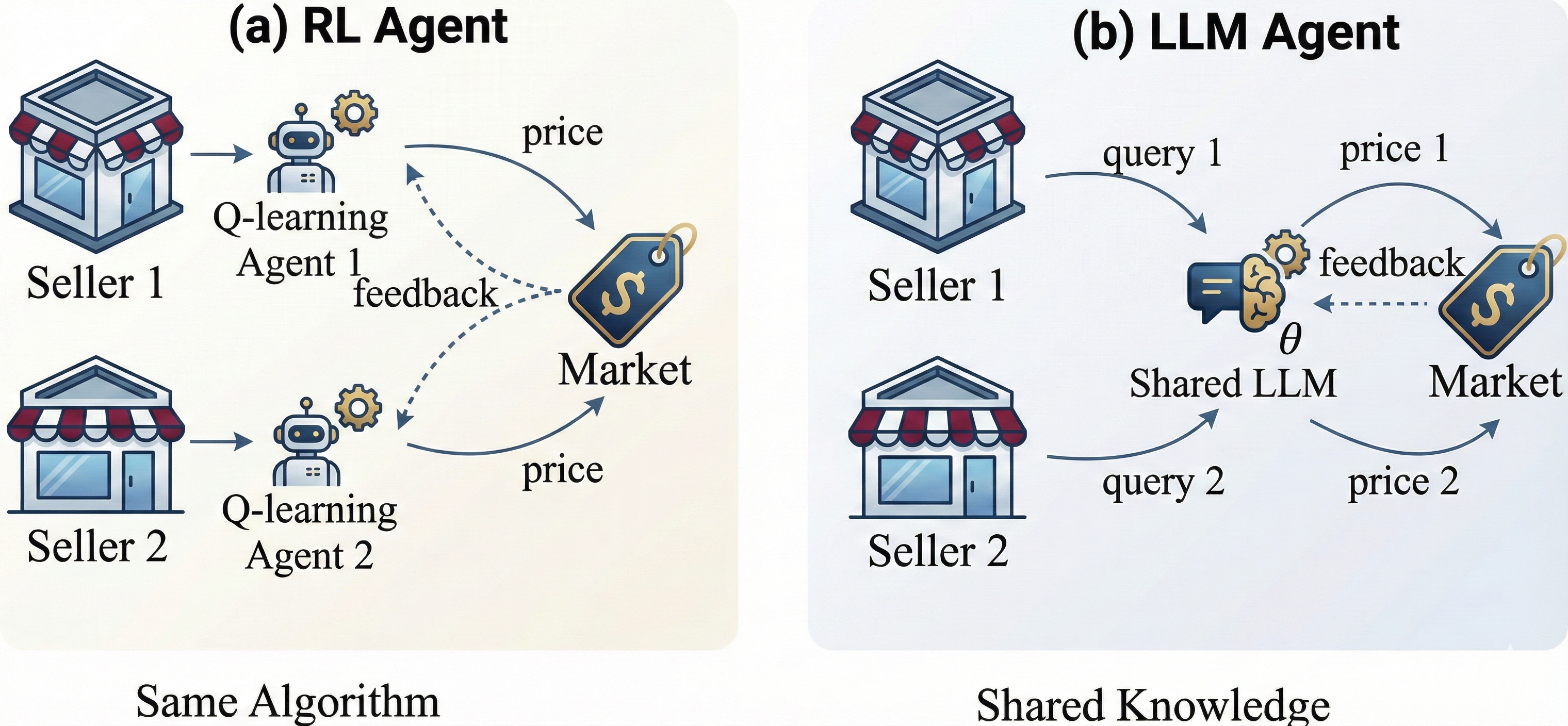

Conceptual contrast between RL-based pricing (Trial & Error) and LLM-based pricing (Shared Knowledge & Feedback Loops)

Evaluation Highlights

- Establishes a critical output-fidelity threshold ρ*: below this, competitive pricing is the unique outcome; above it, collusive pricing becomes a stable equilibrium

- Proves that with perfect output fidelity (ρ=1), full collusion emerges from any interior starting point of the model's preference

- Shows that the region of indeterminate outcomes (where competition is possible) shrinks at a rate of O(1/√b) as batch size b increases, making collusion more predictable with infrequent updates

Breakthrough Assessment

9/10

Provides the first theoretical mechanism explaining *why* pre-trained LLMs collude faster than RL agents. The counter-intuitive finding that 'robust' operational practices (low temperature, infrequent updates) cause collusion is highly significant for policy.