📝 Paper Summary

General-Sum Games

Equilibrium Selection

NePPO stabilizes general-sum multi-agent learning by training a shared potential function whose cooperative equilibrium provably approximates the original game's Nash equilibrium, converting competitive dynamics into a cooperative proxy.

Core Problem

Multi-agent reinforcement learning (MARL) is unstable in general-sum games: standard algorithms guarantee convergence only in zero-sum or fully cooperative settings, and they can fail when agents have heterogeneous, conflicting preferences.

Why it matters:

- Real-world systems (autonomous driving, logistics) involve mixed cooperative-competitive interactions where simple cooperative assumptions fail

- Current methods like MAPPO and MADDPG lack principled objectives for general-sum games, leading to cycling or chaotic learning dynamics

- Nash equilibria in these settings are often non-unique, requiring a mechanism for selecting efficient equilibria rather than just any stable point

Concrete Example:

In a mixed-motive scenario such as dynamic pricing or autonomous racing, an agent that greedily maximizes its own reward can destabilize the other agents' learning. Standard self-play may cycle between strategies indefinitely without converging, whereas NePPO learns a shared 'potential' signal that locally aligns these conflicting gradients.
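The cycling behaviour can be reproduced in a very small setting. The sketch below is an illustration only: it uses alternating best responses as a stand-in for self-play, and the payoff tables `U1` and `U2` are hypothetical values chosen so that the game has no pure Nash equilibrium. Nothing here is taken from the paper.

```python
# Hypothetical 2x2 general-sum game, indexed as U[a1][a2]. Assumed values, not from the paper.
U1 = [[2, 0], [0, 1]]  # payoffs of player 0 (chooses a1)
U2 = [[0, 3], [1, 0]]  # payoffs of player 1 (chooses a2)


def best_response(player, profile):
    """Return the action that maximizes `player`'s payoff against the other's current action."""
    a1, a2 = profile
    if player == 0:
        return max(range(2), key=lambda a: U1[a][a2])
    return max(range(2), key=lambda a: U2[a1][a])


def is_pure_nash(profile):
    """True if neither player can gain by deviating unilaterally."""
    a1, a2 = profile
    best1 = max(U1[a][a2] for a in range(2))
    best2 = max(U2[a1][a] for a in range(2))
    return U1[a1][a2] >= best1 and U2[a1][a2] >= best2


def alternating_best_response(start, steps):
    """Players take turns (player 0 first) switching to their best response."""
    profile = start
    trajectory = [profile]
    for t in range(steps):
        player = t % 2
        action = best_response(player, profile)
        profile = (action, profile[1]) if player == 0 else (profile[0], action)
        trajectory.append(profile)
    return trajectory


trajectory = alternating_best_response((0, 1), 8)
print(trajectory)
```

Starting from `(0, 1)`, the trajectory returns to its starting profile every four steps and never reaches a profile where both players are satisfied. This is the kind of non-convergence that a shared potential signal is meant to avoid.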

Key Novelty

Near-Potential Policy Optimization (NePPO)

- Learns a player-independent potential function (a Markov near-potential function, MNPF) such that cooperatively maximizing this single function yields a policy profile that is an approximate Nash equilibrium of the original competitive game

- Minimizes a novel 'local discrepancy' objective that measures the gap between the potential function's gradients and the agents' true utility gradients at the equilibrium point only, rather than globally across all policies
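The reasoning behind the local objective can be checked on a discrete analogue. If a profile maximizes the potential, any unilateral deviation changes the potential by a non-positive amount. A player's gain from deviating is therefore at most the mismatch between its utility change and the potential change at that profile. The sketch below replaces gradients with unilateral pure-strategy deviations in a one-shot game. The payoff tables `UTILS` and the candidate potential `PHI` are hypothetical numbers, not values from the paper.

```python
from itertools import product

# Hypothetical payoffs, indexed as UTILS[player][a1][a2]. Assumed values, not from the paper.
UTILS = [
    [[3.0, 0.0], [2.0, 1.0]],  # player 0
    [[2.0, 0.0], [1.0, 3.0]],  # player 1
]
# Hypothetical candidate potential shared by both players, indexed as PHI[a1][a2].
PHI = [[3.0, 0.5], [2.0, 2.0]]
N_ACTIONS = (2, 2)


def value(table, profile):
    return table[profile[0]][profile[1]]


def deviations(profile):
    """Yield (player, new_profile) for every unilateral deviation from `profile`."""
    for player, n in enumerate(N_ACTIONS):
        for alt in range(n):
            if alt != profile[player]:
                dev = list(profile)
                dev[player] = alt
                yield player, tuple(dev)


def discrepancy_at(profile):
    """Largest mismatch between utility change and potential change at `profile`."""
    return max(
        abs((value(UTILS[p], dev) - value(UTILS[p], profile))
            - (value(PHI, dev) - value(PHI, profile)))
        for p, dev in deviations(profile)
    )


def nash_gap(profile):
    """Largest gain any player can obtain by deviating unilaterally (0 at a Nash equilibrium)."""
    gains = [value(UTILS[p], dev) - value(UTILS[p], profile) for p, dev in deviations(profile)]
    return max(0.0, max(gains))


profiles = list(product(range(2), range(2)))
a_star = max(profiles, key=lambda a: value(PHI, a))        # cooperative maximizer of PHI
local_disc = discrepancy_at(a_star)                         # measured only at a_star
global_disc = max(discrepancy_at(a) for a in profiles)      # measured over all profiles

print(a_star, local_disc, global_disc, nash_gap(a_star))
```

With these numbers the potential is maximized at `(0, 0)`, where the local discrepancy is 0.5 and the Nash gap is 0. The global discrepancy is 2.0, so a potential can be a poor global fit and still certify a good equilibrium at its maximizer.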

Architecture

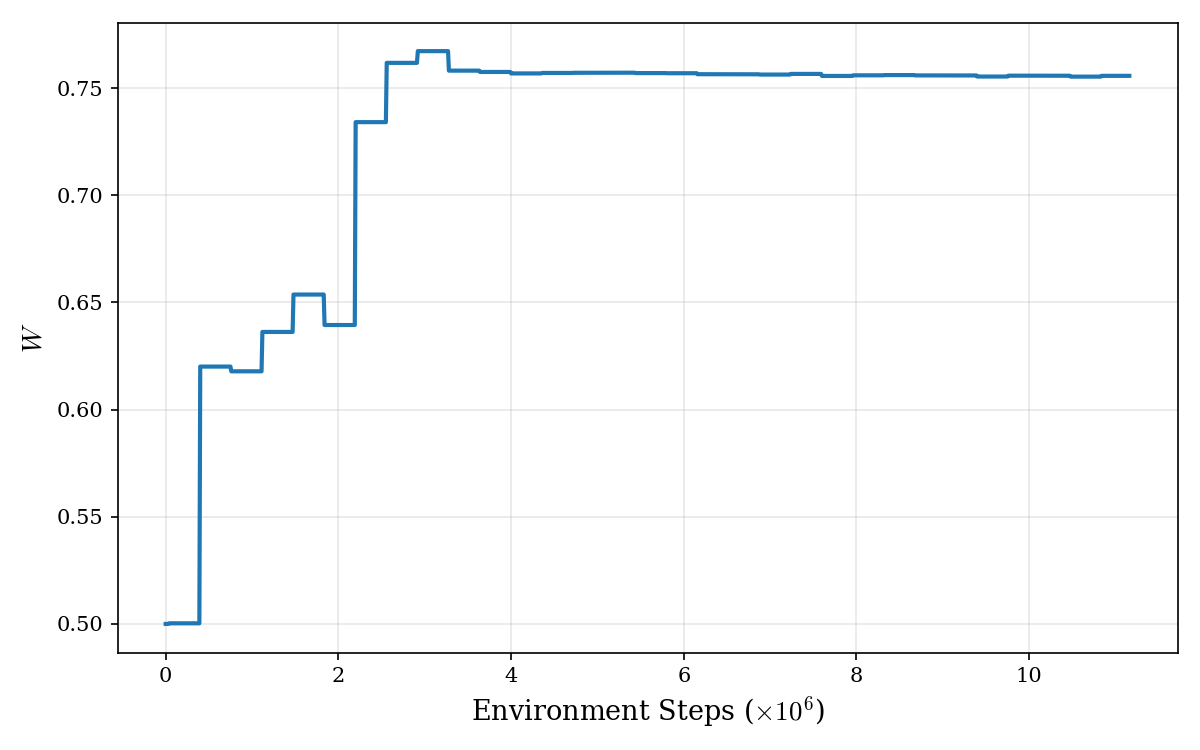

The iterative training loop of NePPO
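The figure is not reproduced here, so the following is only a guess at the shape of such a loop, based on the description in this summary. It is a toy bilevel sketch and not the paper's algorithm. The inner step cooperatively maximizes a parameterized potential. The outer step updates the potential's parameters with a zeroth-order (function-evaluation-only) estimate of the local discrepancy gradient. The utilities `u1` and `u2`, the quadratic `potential`, and all step sizes are assumptions made for illustration.

```python
# Hypothetical two-player game with scalar actions x and y (not an exact potential game).
def u1(x, y):
    return -(x - 1.0) ** 2 + 0.5 * x * y


def u2(x, y):
    return -(y + 1.0) ** 2 - 0.5 * x * y


def potential(theta, x, y):
    """Assumed potential family: a concave quadratic centred at theta."""
    return -(x - theta[0]) ** 2 - (y - theta[1]) ** 2


def central_diff(f, t, h=1e-4):
    """Derivative estimate that uses function evaluations only."""
    return (f(t + h) - f(t - h)) / (2.0 * h)


def maximize_potential(theta, steps=50, lr=0.25):
    """Inner loop: both players ascend the shared potential."""
    x, y = 0.0, 0.0
    for _ in range(steps):
        gx = central_diff(lambda t: potential(theta, t, y), x)
        gy = central_diff(lambda t: potential(theta, x, t), y)
        x, y = x + lr * gx, y + lr * gy
    return x, y


def local_discrepancy(theta):
    """Squared gap between own-utility and potential derivatives at the potential's maximizer."""
    x, y = maximize_potential(theta)
    d1 = central_diff(lambda t: u1(t, y), x) - central_diff(lambda t: potential(theta, t, y), x)
    d2 = central_diff(lambda t: u2(x, t), y) - central_diff(lambda t: potential(theta, x, t), y)
    return d1 ** 2 + d2 ** 2


def train(theta=(0.0, 0.0), iters=50, lr=0.1, h=1e-3):
    """Outer loop: zeroth-order descent on the local discrepancy."""
    theta = list(theta)
    for _ in range(iters):
        grad = []
        for k in range(len(theta)):
            up, down = list(theta), list(theta)
            up[k] += h
            down[k] -= h
            grad.append((local_discrepancy(up) - local_discrepancy(down)) / (2.0 * h))
        theta = [t - lr * g for t, g in zip(theta, grad)]
    return theta


theta = train()
print(theta, maximize_potential(theta))
```

In this toy game the Nash equilibrium is at x = 12/17 and y = -20/17, and the maximizer of the learned potential converges to that point. Each outer update needs two discrepancy evaluations per parameter, and each evaluation reruns the inner loop, so the cost grows with the number of potential parameters.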

Breakthrough Assessment

7/10

The theoretical framework, which bridges cooperative and general-sum games through a learned potential function, is elegant and addresses a major stability gap in MARL. However, the method's reliance on zeroth-order optimization may limit its scalability.